Living Archive

An AI performance experiment

Living Archive is a collaboration between Google Arts and Culture and Wayne McGregor, exploring the use of machine learning in a creative process

This project has three main components:

- A creative tool, created for Wayne McGregor and his dancers

- A public experiment, showcasing Wayne’s archives in a new, engaging way

- A film by Ben Cullen Williams, with visuals created from predicted data

Getting the data

Wayne McGregor provided us with his whole archive: footage from live performances, music videos, interviews, behind-the-scenes material, etc.

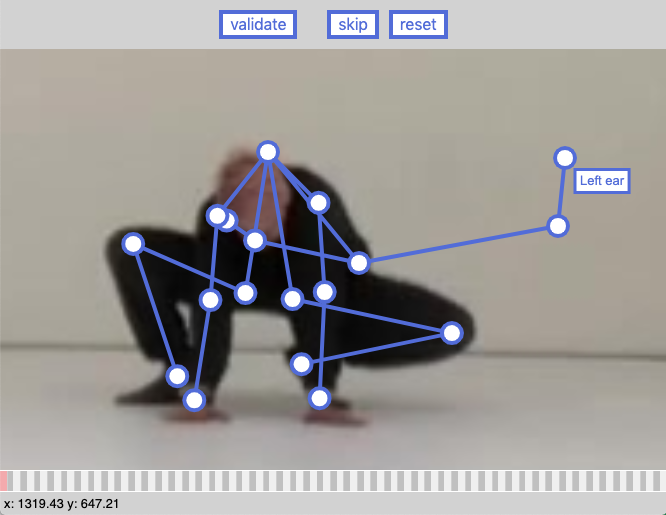

Running pose detection on the archive revealed that a lot of it was not suitable for training a predictive model: low resolution, dark and stylised footage, edited footage, fast-moving camera, etc.

Thankfully, WMG later provided us with good-quality footage that was, more importantly, labelled by individual dancer

All the footage (the initial archive and the labelled videos) was then run through pose-detection software (which detects people and their arms, feet, head, etc. in an image), cleaned up and “packaged” as a dataset for use in a machine learning model
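
The clean-up step is not detailed here, so the following is only a minimal sketch of what such a step could look like. It assumes each detected pose is a set of keypoints given as (x, y, confidence); the function name `clean_poses`, the array layout and the `min_confidence` threshold are hypothetical and not taken from the project.

```python
import numpy as np

def clean_poses(poses, min_confidence=0.5):
    """Filter and normalise poses (illustrative sketch, not the project's code).

    poses: array of shape (n_frames, n_keypoints, 3) holding (x, y, confidence).
    Returns an array of shape (n_kept, n_keypoints, 2).
    """
    poses = np.asarray(poses, dtype=float)
    # Keep only frames whose average keypoint confidence is high enough.
    keep = poses[:, :, 2].mean(axis=1) >= min_confidence
    xy = poses[keep, :, :2]
    # Centre each pose on the mean of its keypoints.
    xy = xy - xy.mean(axis=1, keepdims=True)
    # Scale each pose so that its largest absolute coordinate is 1.
    extent = np.abs(xy).max(axis=(1, 2), keepdims=True)
    xy = xy / np.where(extent > 0, extent, 1.0)
    return xy
```

Centring and scaling make poses comparable regardless of where the dancer stands in the frame or how far they are from the camera, which is one plausible reason to normalise before training.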

Numbers

- 103 archive videos

- 30 single-dancer videos

- 57 hours of video

- 386 000 poses in final dataset

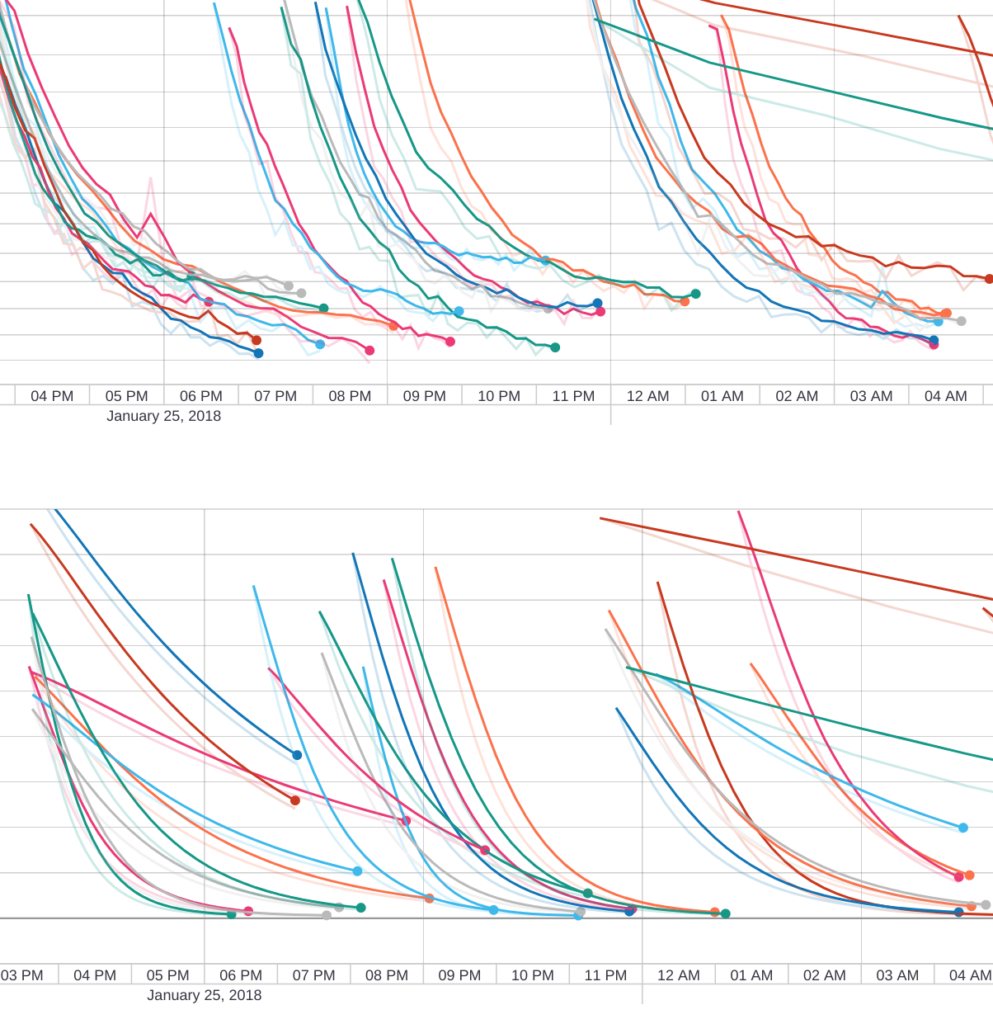

Making predictions

With that data, we needed to train an algorithm to create ‘new’ dance movements

We settled on 3 methods to do that:

- LSTM: long short-term memory model (the “real” machine learning method)

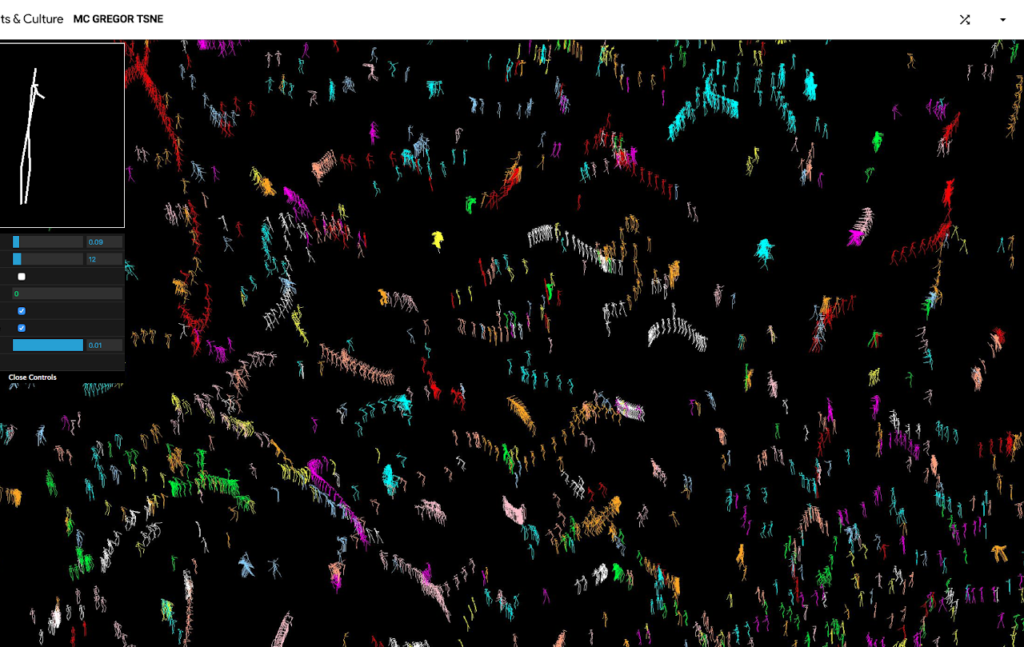

- t-SNE: plot the poses from the dataset in a 2D space, draw a line in that space, select the poses close to the line, then interpolate between them

- Graph: same as t-SNE, but with more dimensions
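
The line-based method can be sketched roughly as follows. This is one possible reading of "select poses close to the line, interpolate", not the project's implementation: it assumes the 2D coordinates have already been computed (for example by t-SNE), picks the pose nearest to evenly spaced points on the line, and blends linearly between the picked poses. The function name and its parameters are hypothetical.

```python
import numpy as np

def poses_along_line(embedding, poses, start, end, n_samples=5, n_between=3):
    """Pick poses near a line in the 2D map and interpolate between them.

    embedding: (n, 2) map coordinates, assumed precomputed.
    poses:     (n, d) pose vectors, in the same order as `embedding`.
    """
    embedding = np.asarray(embedding, dtype=float)
    poses = np.asarray(poses, dtype=float)
    # Evenly spaced points on the segment from start to end.
    t = np.linspace(0.0, 1.0, n_samples)[:, None]
    line = (1 - t) * np.asarray(start, dtype=float) + t * np.asarray(end, dtype=float)
    # For each point on the line, find the index of the closest mapped pose.
    dists = np.linalg.norm(embedding[None, :, :] - line[:, None, :], axis=2)
    nearest = dists.argmin(axis=1)
    # Drop consecutive repeats so the same pose is not held twice in a row.
    picked = [nearest[0]] + [b for a, b in zip(nearest, nearest[1:]) if b != a]
    keyframes = poses[picked]
    # Linearly interpolate between consecutive keyframes.
    frames = []
    for a, b in zip(keyframes, keyframes[1:]):
        for s in np.linspace(0.0, 1.0, n_between, endpoint=False):
            frames.append((1 - s) * a + s * b)
    frames.append(keyframes[-1])
    return np.array(picked), np.array(frames)
```

The same idea carries over to a space with more than two dimensions by changing the shape of `embedding`, `start` and `end`; whether the graph method works this way is not stated in the text.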

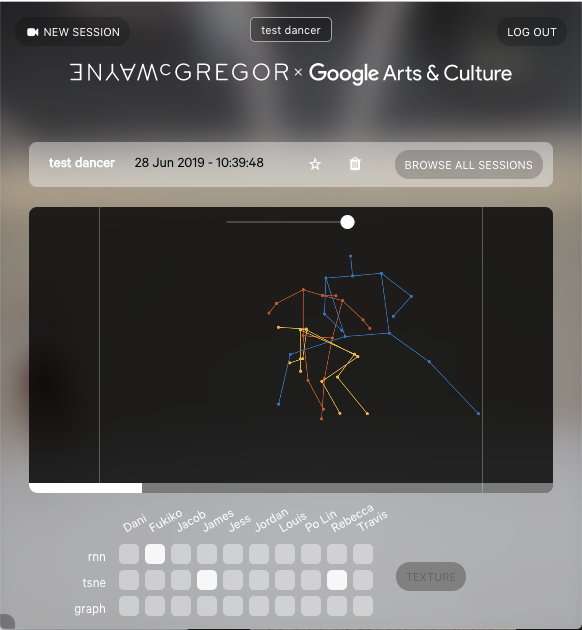

Choreography tool

We built a tool for Wayne McGregor and his dancers to easily request and use predictions:

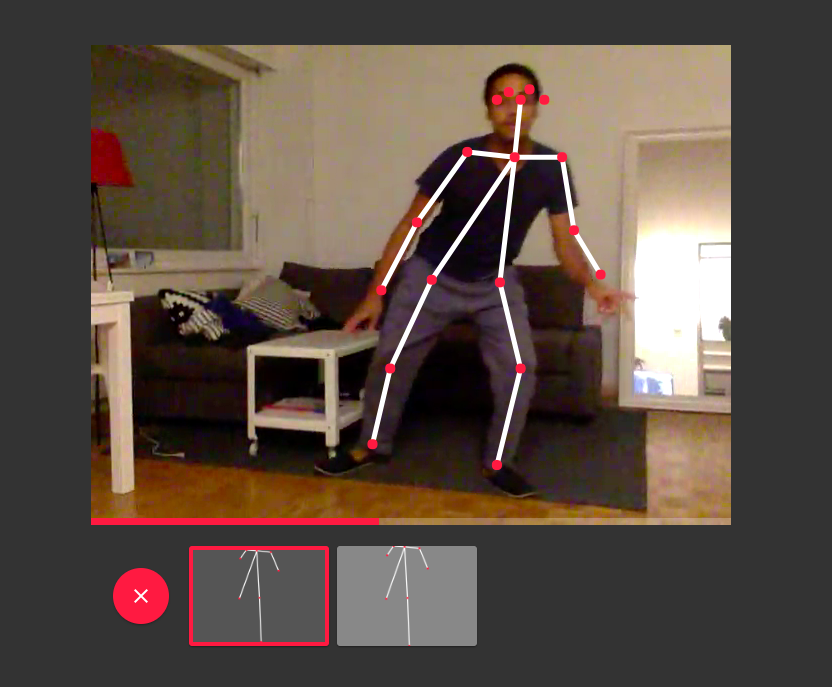

A dancer records a movement using the webcam. Their pose is detected on each frame, then analysed, and the result is fed to each prediction model (one per dancer)

The results are displayed in the tool and can be combined to create more complex outputs

Seven days

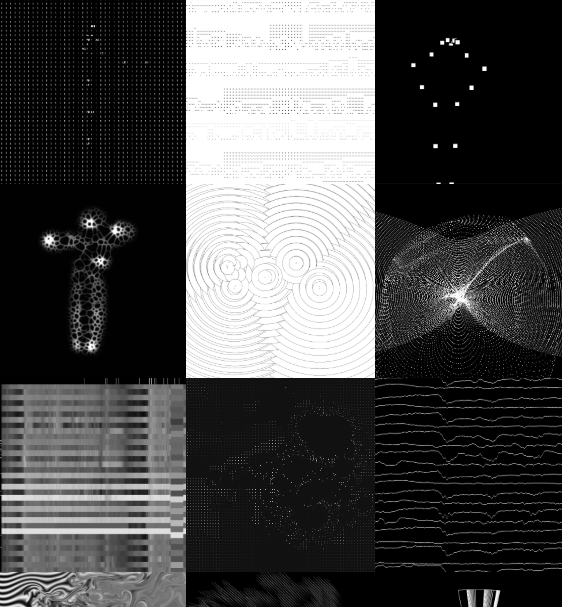

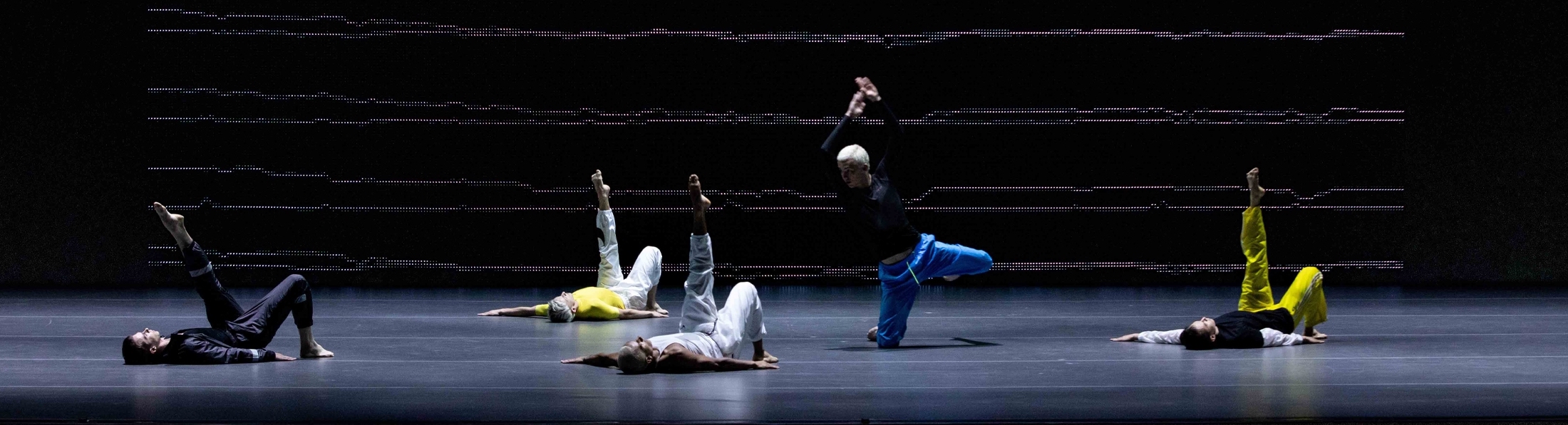

Seven days is the name of the film created by Ben Cullen Williams as a backdrop for the live stage show’s scenography

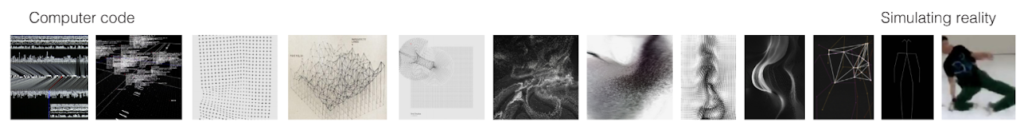

The concept of the film was to showcase a spectrum of visuals, from the most abstract (binary numbers) to the most concrete (actual filmed dancers)

The creative developers at the lab created the raw visuals for the film, based on predictions generated by the choreography tool

Sharing the love

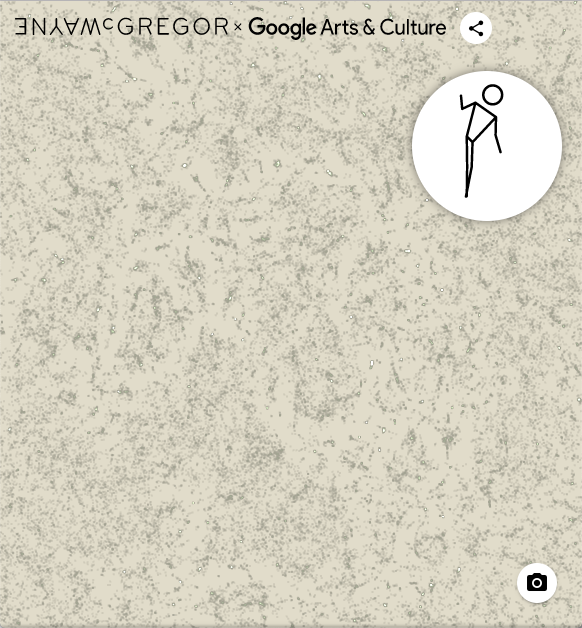

We wanted the general public to be able to explore Wayne’s archive as we did, in a new, engaging way

This experiment allows users to explore, curate and share the poses from Wayne’s archives, mapped using the t-SNE method (the more similar two poses are, the closer they are on the map)

Metadata is available for each pose, enabling users to learn more about its origin: videos of the performance (when we’re allowed to show them), behind-the-scenes footage, details on the performance, etc.

Users can also use their webcam to find a pose by making one themselves
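
How the webcam lookup works is not described here. A simple way to think about it is a nearest-neighbour search: the user's detected pose is compared with every archived pose and the closest ones are returned. The sketch below assumes poses are plain keypoint vectors normalised in the same way on both sides; `find_closest_pose` and its parameters are hypothetical names.

```python
import numpy as np

def find_closest_pose(query, archive, k=3):
    """Return the indices and distances of the k archive poses closest to `query`.

    query:   one pose, any shape, flattened to a vector.
    archive: sequence of poses with the same number of values as `query`.
    """
    archive = np.asarray(archive, dtype=float)
    archive = archive.reshape(len(archive), -1)
    query = np.asarray(query, dtype=float).reshape(-1)
    # Euclidean distance between the query and every archived pose.
    dists = np.linalg.norm(archive - query, axis=1)
    # Indices of the k smallest distances, closest first.
    order = np.argsort(dists)[:k]
    return order, dists[order]
```

A brute-force search like this is fine for illustration; with several hundred thousand poses, an indexed or approximate search would likely be preferable.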

Press & awards

The project has been featured and advertised in a variety of places, including:

- BBC Click

- Wired

- Wayne McGregor’s website

- It’s Nice That (video, article)

- Frame magazine

- FWA award

The use of predictive analytics doesn’t have to result in predictive work. In fact, algorithmically generated outcomes can present an aesthetic that is unexpected and off the beaten track

HOW PREDICTIVE ANALYTICS CAN CHANGE OUR NOTION OF BEAUTY – Frame Magazine

People

The project was a collaboration between Studio Wayne McGregor and Google Arts and Culture Lab:

- Bastien Girschig: Machine learning model, development, project lead

- Gael Hugo: Early UI experiments, Pix2Pix pose rendering

- Damien Henry: Project management, ML mentor

- Simon Doury & Romain Cazier: Early tool UI

- Cyril Diagne: Technical help (Kubernetes and internal Google tools)

- Mario Klingemann: Graph prediction model

- Everyone at the lab: Visuals for the Seven days film