Wimbi is a mixed-reality game played with an iPad and a table. It was created as my Media and Interaction Design diploma project at ECAL.

Process

The obvious technical challenge here was detecting the position of the taps on the table. The rest (linking up the detection and the game, creating the game, etc.) were secondary problems in my mind.

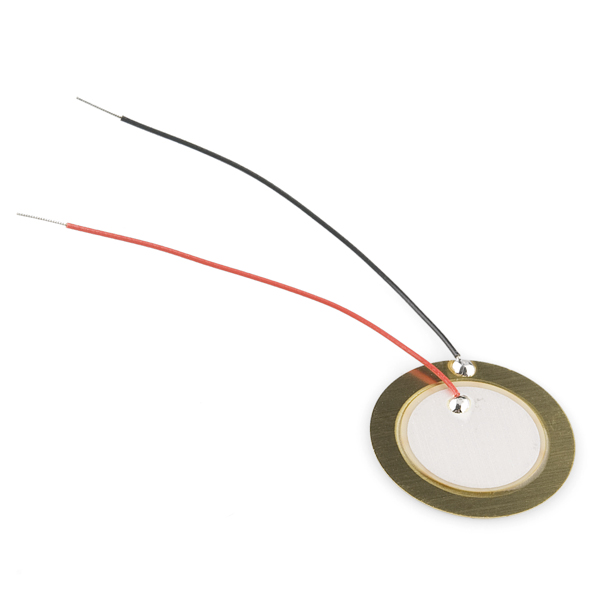

Step 1 – Piezoelectric elements

These things are cheap, and they work quite well for detecting small vibrations, so I decided to use them. The theory was that, by detecting when the vibration reached each sensor and then computing the time differences between those arrivals, I could approximate the relative distance between the “tap” and each sensor.
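To make the theory concrete, here is a minimal Python sketch of locating a tap from arrival-time differences. It is not the code from the project: the function names, the sensor layout, the grid-search approach and the wave speed are all assumptions chosen for illustration, and the real propagation speed depends heavily on the table material.

```python
import math

def arrival_times(tap, sensors, speed):
    """Time (s) for a vibration starting at `tap` to reach each sensor (positions in metres)."""
    return [math.dist(tap, s) / speed for s in sensors]

def locate_tap(times, sensors, speed, width, height, step=0.005):
    """Grid-search the table for the point whose predicted time
    differences best match the measured ones.

    Only differences relative to the first sensor are used, because
    the absolute moment of the tap is unknown.
    """
    measured = [t - times[0] for t in times]
    best, best_err = None, float("inf")
    for ix in range(int(round(width / step)) + 1):
        for iy in range(int(round(height / step)) + 1):
            p = (ix * step, iy * step)
            pred = arrival_times(p, sensors, speed)
            # Sum of squared mismatches between predicted and measured differences
            err = sum(((pt - pred[0]) - m) ** 2 for pt, m in zip(pred, measured))
            if err < best_err:
                best, best_err = p, err
    return best

# Hypothetical setup: one sensor in each corner of a 1.0 m x 0.6 m table.
SENSORS = [(0.0, 0.0), (1.0, 0.0), (0.0, 0.6), (1.0, 0.6)]
SPEED = 500.0  # m/s, placeholder value; must be measured for the real table
```

With a wave speed in the hundreds of metres per second, the differences across a table are in the range of a millisecond or less, which is why the timing resolution of the measuring hardware matters so much here.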

This turned out to be quite tricky.

I ran a small experiment, connecting a few piezo elements to an oscilloscope to confirm that there was a detectable time difference at the scale and with the materials I was working with. There was. I was happy.

The next step was simply to use an Arduino to do the same thing as the oscilloscope (note-to-self: an Arduino is not an oscilloscope).

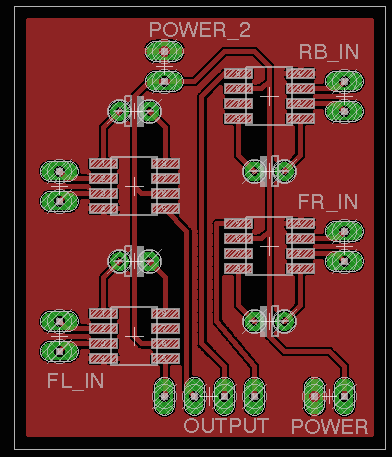

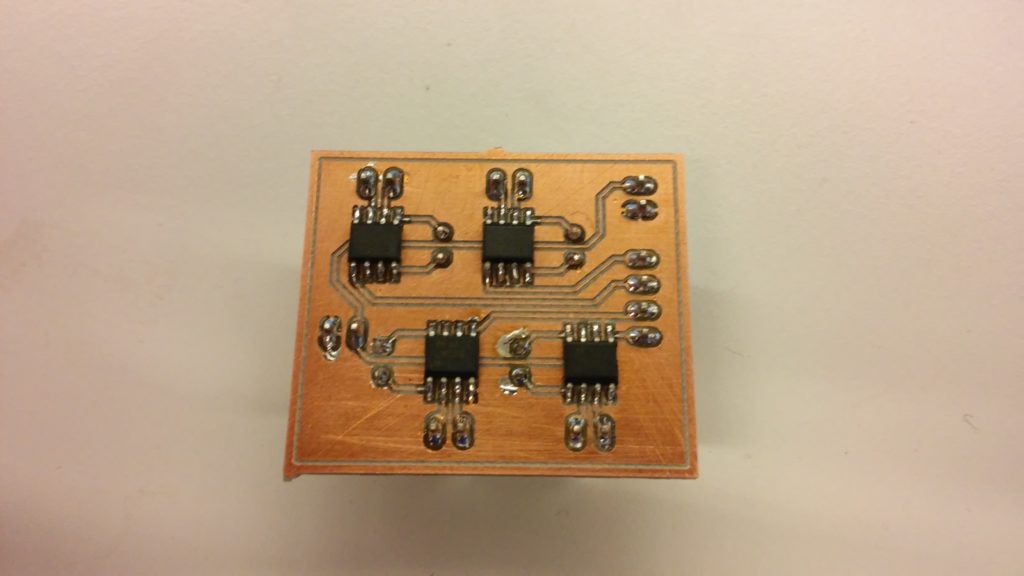

The first issue came up: the signal was too weak. Not a problem, I’ll create a pretty little PCB with an amplifier for each sensor:

And the result was:

… disappointing. This would never work in time. On to the next solution!

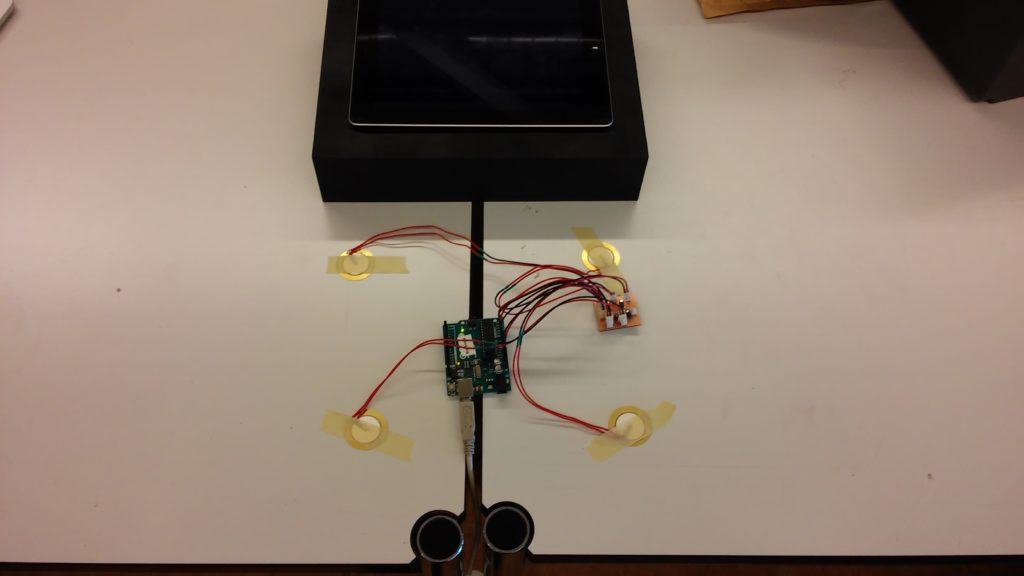

Step 2 – Ultrasonic proximity sensors

A bit less “low-tech” than the previous ones, but now time is kind of running out and I need a solution. These will do just fine.

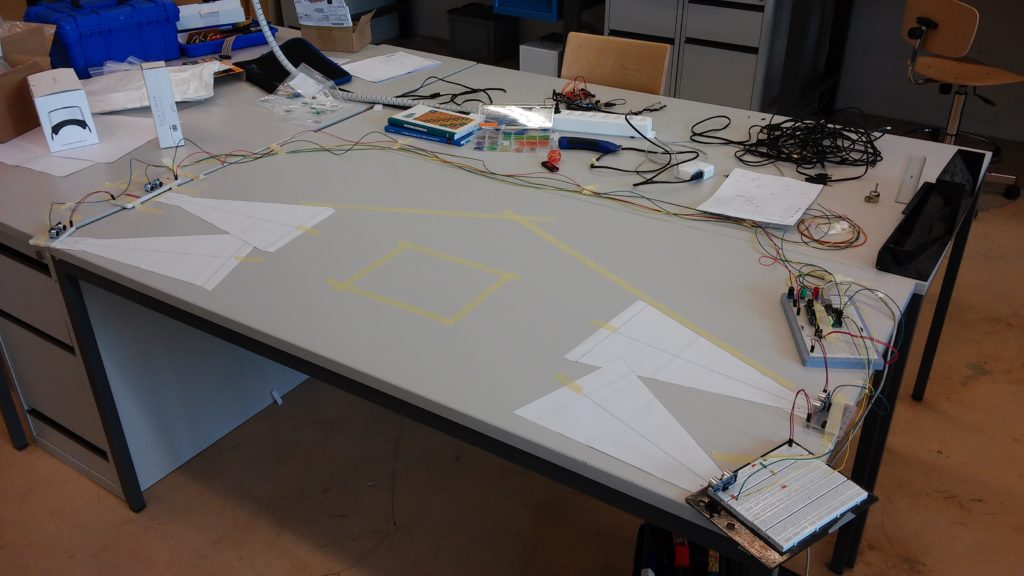

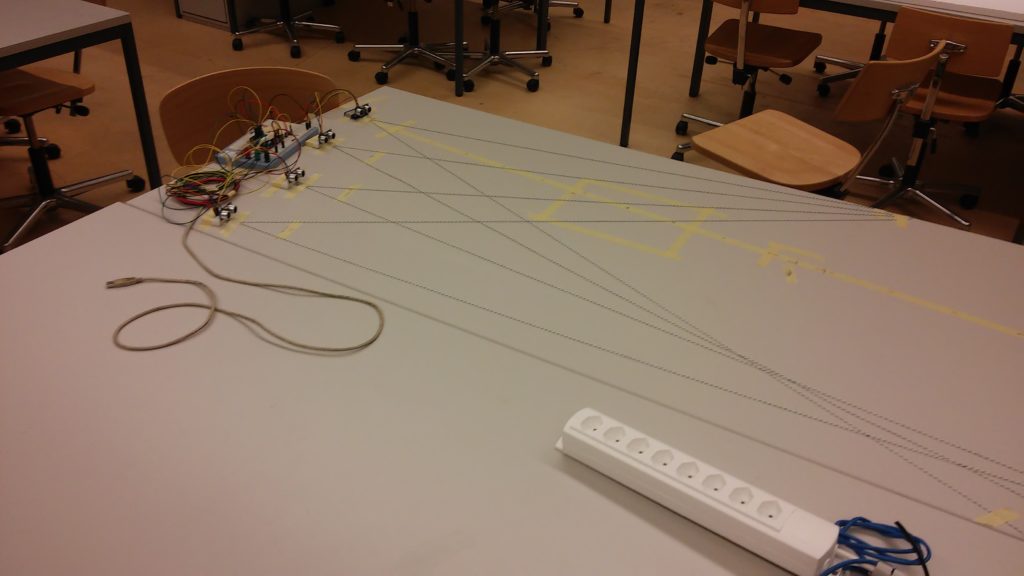

I connect a bunch of them to an Arduino, write a bit of triangulation code, and… it actually works!
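For readers curious what "a bit of triangulation code" can look like, here is a minimal Python sketch of the two-sensor case. It is an illustration under stated assumptions, not the project's actual code: it assumes two sensors on the same table edge, a known distance (`baseline`) apart, each reporting a distance to the hand in metres. The function name and parameters are hypothetical.

```python
import math

def trilaterate(r1, r2, baseline):
    """Intersect two distance circles to find a point on the table.

    Sensor 1 sits at (0, 0), sensor 2 at (baseline, 0); r1 and r2 are
    the distances each sensor reports. Returns (x, y) with y >= 0
    (the table side of the sensors), or None if the two readings
    cannot both be true.
    """
    x = (r1 ** 2 - r2 ** 2 + baseline ** 2) / (2 * baseline)
    y_squared = r1 ** 2 - x ** 2
    if y_squared < 0:
        return None  # circles do not intersect: noisy or inconsistent readings
    return (x, math.sqrt(y_squared))
```

With more than two sensors, the extra readings can be used to reject outliers or to average several pairwise estimates.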

The tape rectangle on the table represents the theoretical position of the iPad. Tapping on a corner of the tape rectangle should result in a wave being created in the matching corner of the iPad screen. And it does!
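The mapping from the taped rectangle to the screen is a simple rescaling. A minimal sketch in Python, assuming the rectangle is axis-aligned with the sensor coordinates and assuming a 1024 × 768 pixel screen (both are assumptions for illustration, as is the function name):

```python
def table_to_screen(tap, rect_origin, rect_size, screen_px=(1024, 768)):
    """Map a tap position on the table (metres) to screen pixels.

    rect_origin is the table position of the rectangle's top-left
    corner, rect_size its (width, height). Returns None when the tap
    falls outside the taped rectangle.
    """
    u = (tap[0] - rect_origin[0]) / rect_size[0]  # 0..1 across the width
    v = (tap[1] - rect_origin[1]) / rect_size[1]  # 0..1 across the height
    if not (0.0 <= u <= 1.0 and 0.0 <= v <= 1.0):
        return None
    return (u * screen_px[0], v * screen_px[1])
```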

But there are still some issues. The setup is very brittle: the sensors can’t be moved relative to each other, there is some interference between the sensors, and you have to tap the table quite hard (there is still one piezo listening for vibrations) while keeping your hand out of the way of the sensors, etc.

This is not good enough. Let’s go deeper (shallower?) in the levels of abstraction.

Step 3 – Why not a Kinect?

I know, right?

Yes, those existed then; no, I did not think about using one until that point (3 weeks before the deadline). So I spent a few nights writing some code to map the Kinect’s depth sensor data into a touch interface, and voilà!

With that out of the way, I need to create a game.
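One common way to turn depth data into touches is to compare each frame against a reference frame of the empty table. The Python sketch below illustrates that idea only; it is not the code from the project. It assumes the sensor looks down at the table, that frames arrive as 2D lists of depths in millimetres, and that a value of 0 means "no reading". The function name and thresholds are hypothetical.

```python
def detect_touch(background, frame, near_mm=5, far_mm=25, min_pixels=4):
    """Return the (x, y) pixel centroid of a touch, or None.

    background: depth frame of the empty table (mm).
    frame: current depth frame (mm), same dimensions.
    A pixel counts as touching when it is between near_mm and far_mm
    closer to the sensor than the table surface, i.e. a fingertip
    resting on the table rather than a hand hovering above it.
    """
    sum_x = sum_y = count = 0
    for y, (bg_row, row) in enumerate(zip(background, frame)):
        for x, (bg, depth) in enumerate(zip(bg_row, row)):
            if bg == 0 or depth == 0:
                continue  # assumption: 0 marks an invalid depth reading
            height = bg - depth  # how far above the table this pixel is
            if near_mm <= height <= far_mm:
                sum_x += x
                sum_y += y
                count += 1
    if count < min_pixels:
        return None  # too few pixels: treat as sensor noise
    return (sum_x / count, sum_y / count)
```

The lower threshold filters out depth noise on the bare table, and the upper one ignores hands and arms that are above the surface but not touching it.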

Step 4 – Creating the game

The concept of the game is there; what I need is levels. Creating levels in code may be fun, but it’s also very inefficient, so I created this cute little editor.

And there we are! The game was well received by the people there, and went on to not be a viral success (maybe the fact that it requires a Kinect, an iPad, and a laptop had something to do with it…).