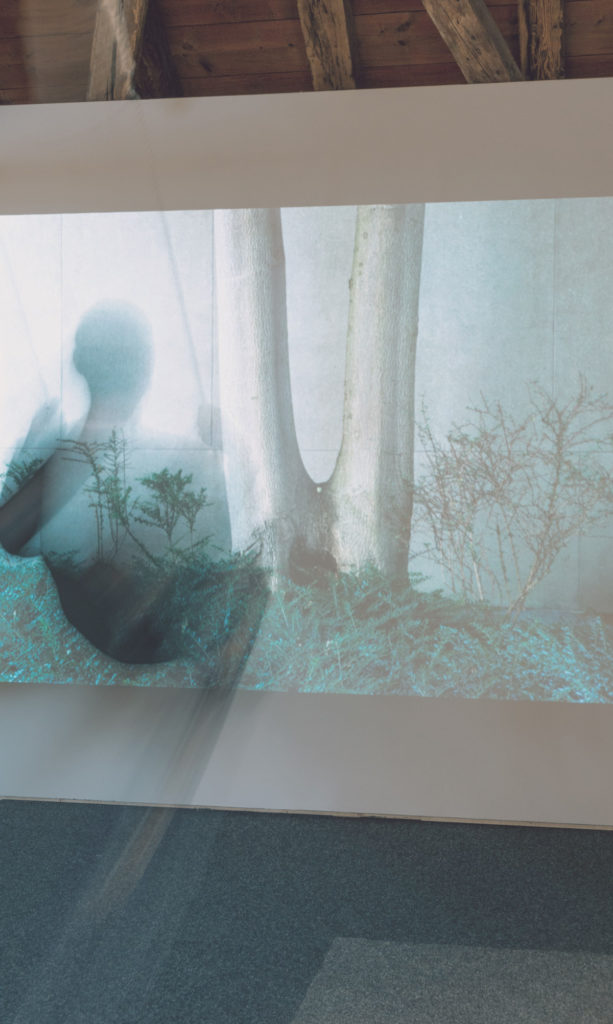

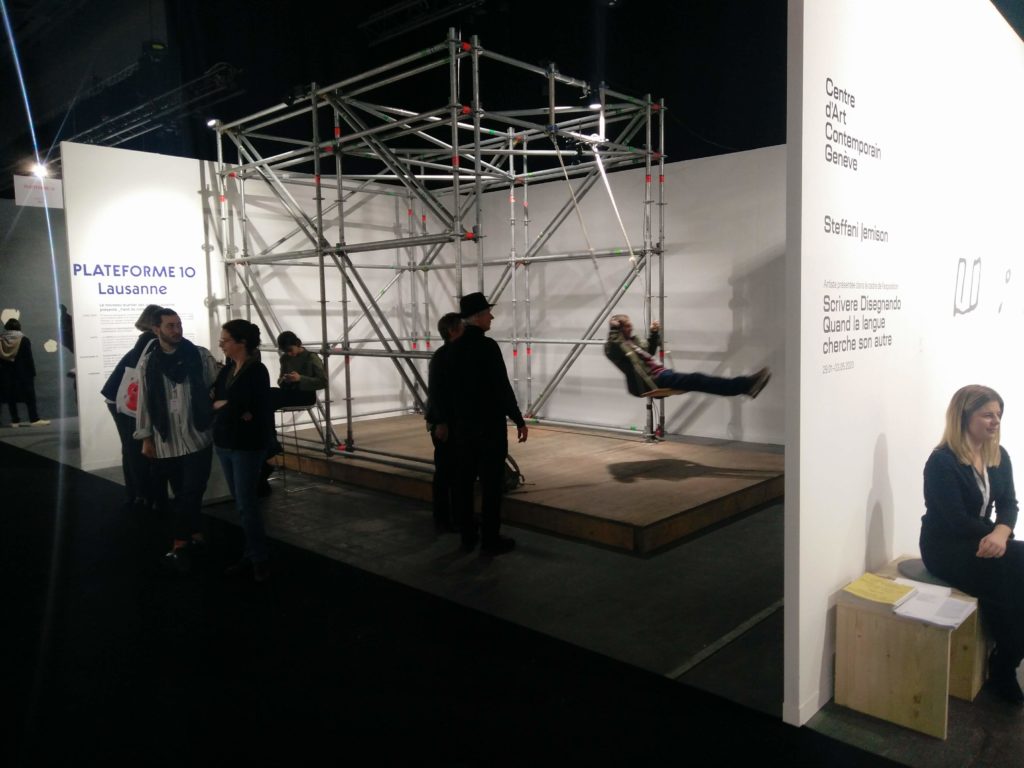

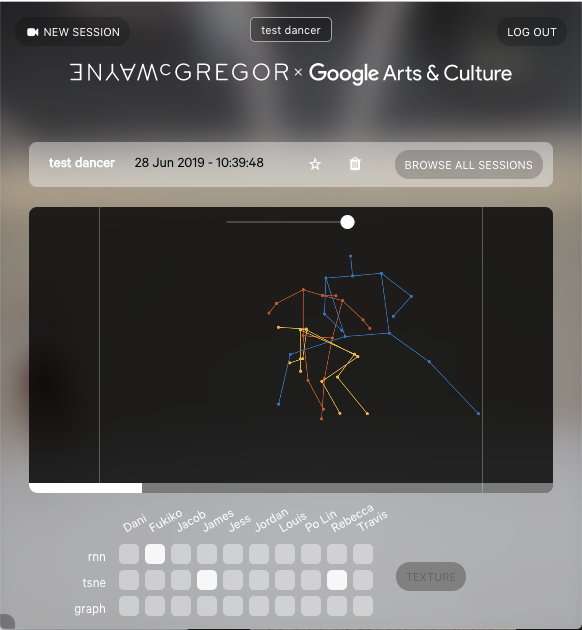

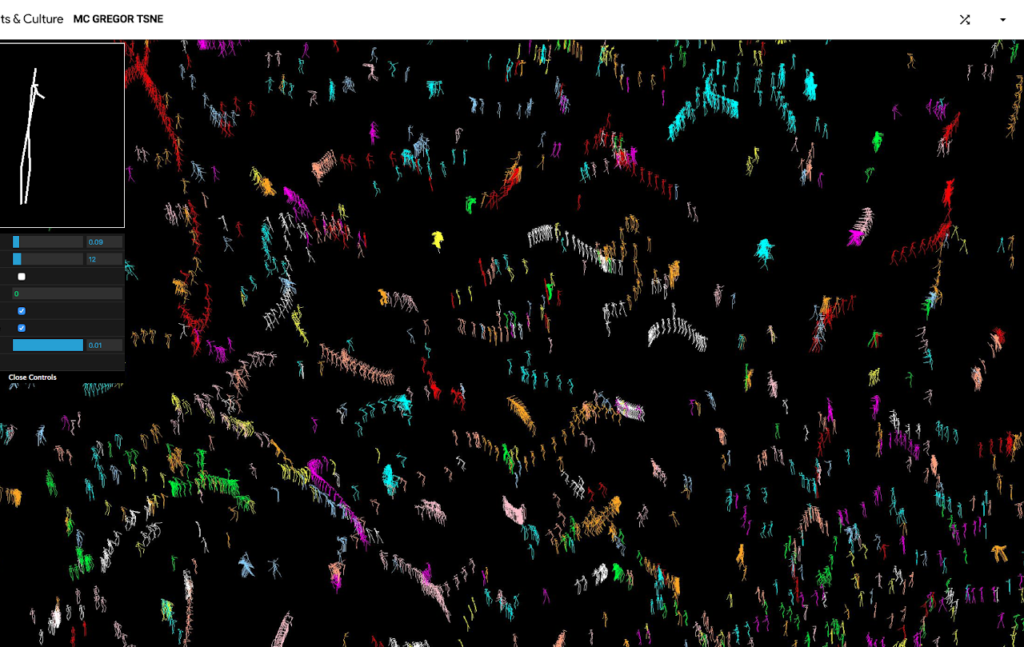

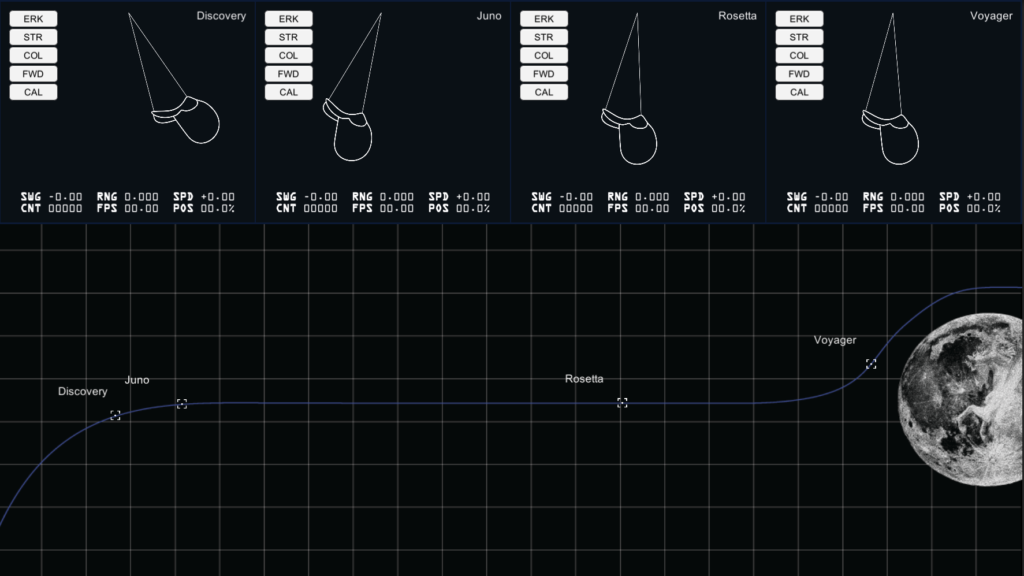

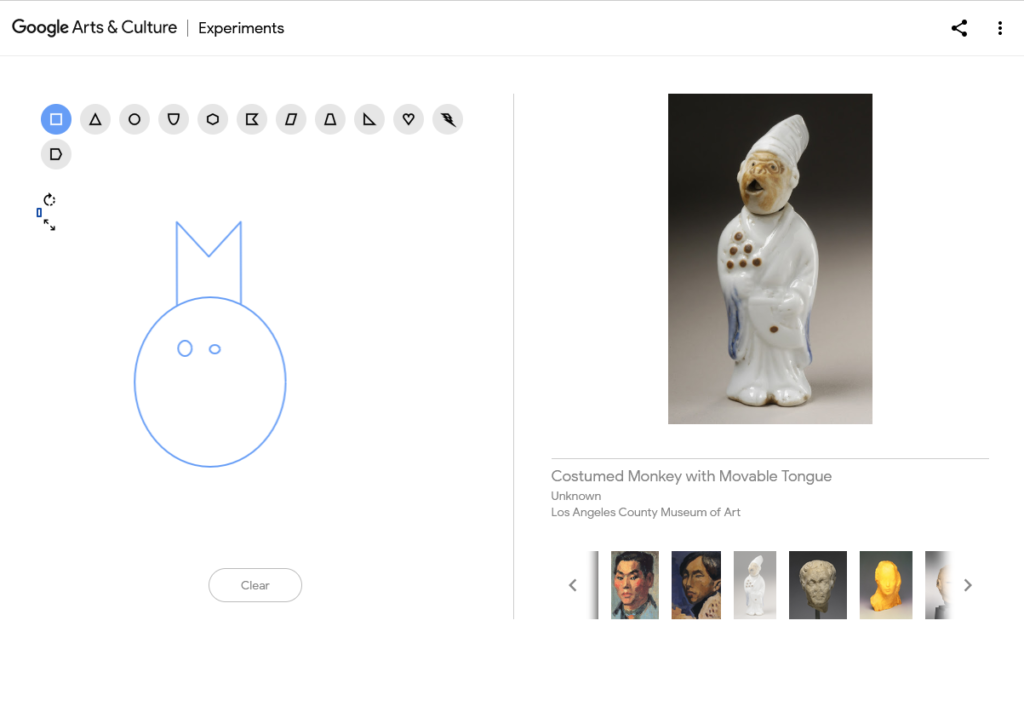

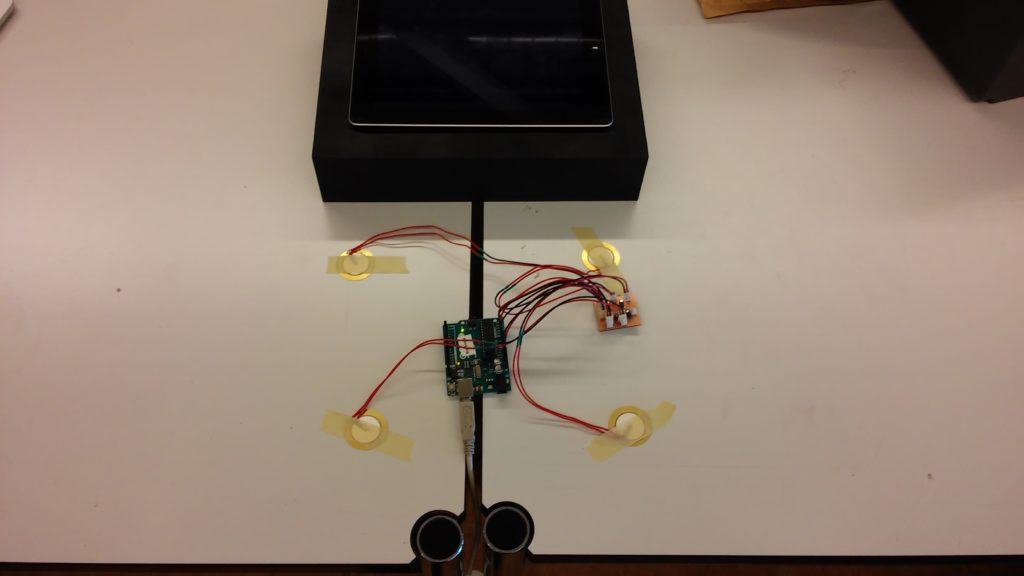

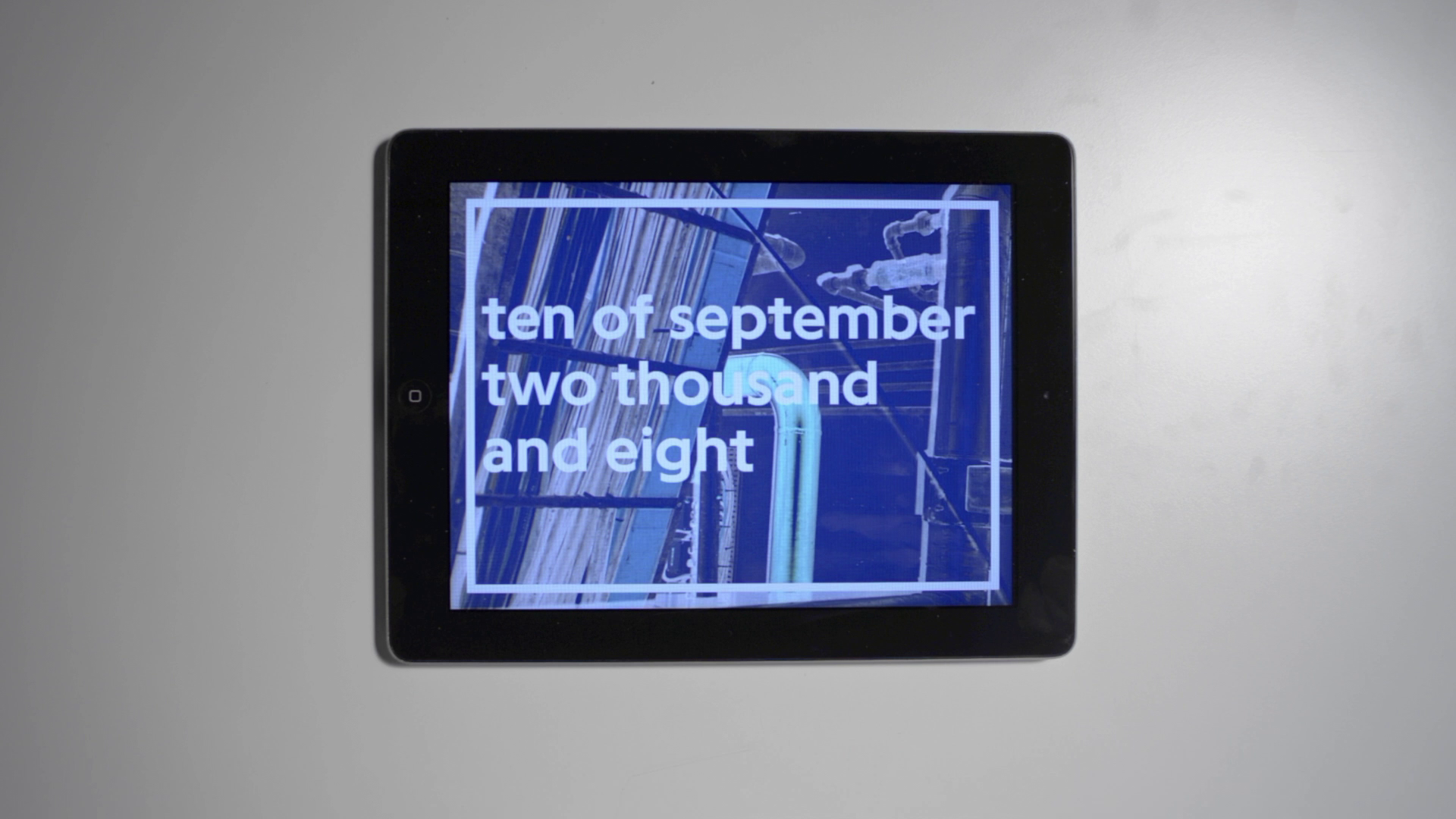

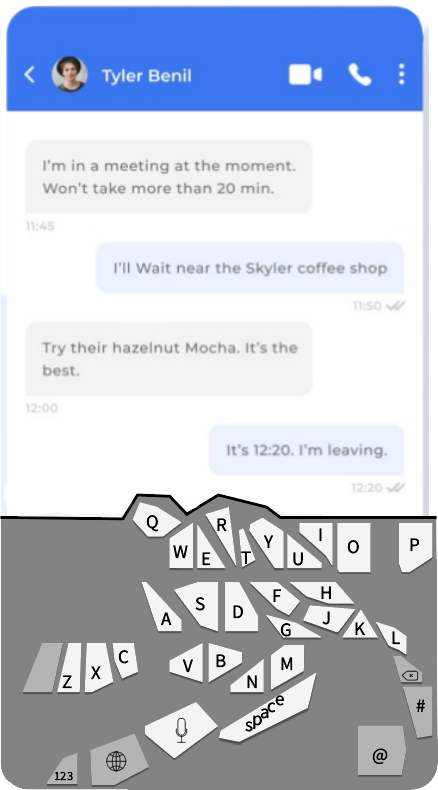

On the occasion of their retrospective exhibition at Casino Luxembourg, Raphael Siboni and Fabien Giraud (the unmanned) wanted to create a 360 video controlled by an “artificial intelligence”.

The idea was to train a model to recognize objects from the artists’ previous movies, and use it to detect those objects in a 360 video filmed inside the exhibition. A simple program would then choose one of the detected objects, track it for a while, choose another one, and so on.
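The "choose one, track it for a while, choose another" loop could be sketched roughly as below. This is only an illustration, not the code used for the exhibition: the `Detection` structure, the `TargetPicker` class, the `dwell` parameter and the idea that each detection carries a stable `track_id` are all assumptions made for the example.

```python
import random
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Detection:
    """One detected object in a frame (hypothetical structure)."""
    track_id: int  # identifier assumed to stay stable across frames
    x: float       # bounding-box centre, in pixels
    y: float


class TargetPicker:
    """Follows one detection for `dwell` seconds, then picks another."""

    def __init__(self, dwell: float = 5.0, rng: Optional[random.Random] = None):
        self.dwell = dwell
        self.rng = rng or random.Random()
        self.current_id: Optional[int] = None
        self.picked_at = float("-inf")

    def update(self, now: float, detections: Sequence[Detection]) -> Optional[Detection]:
        by_id = {d.track_id: d for d in detections}
        expired = now - self.picked_at >= self.dwell
        lost = self.current_id not in by_id
        if (expired or lost) and detections:
            # Prefer a different object when there is more than one candidate.
            others = [d for d in detections if d.track_id != self.current_id]
            self.current_id = self.rng.choice(others or list(detections)).track_id
            self.picked_at = now
        # Returns None when the current target is not visible in this frame.
        return by_id.get(self.current_id)
```

Calling `update()` once per frame with the current timestamp and the list of detections returns the object the camera should be looking at, or `None` if nothing usable is in view.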

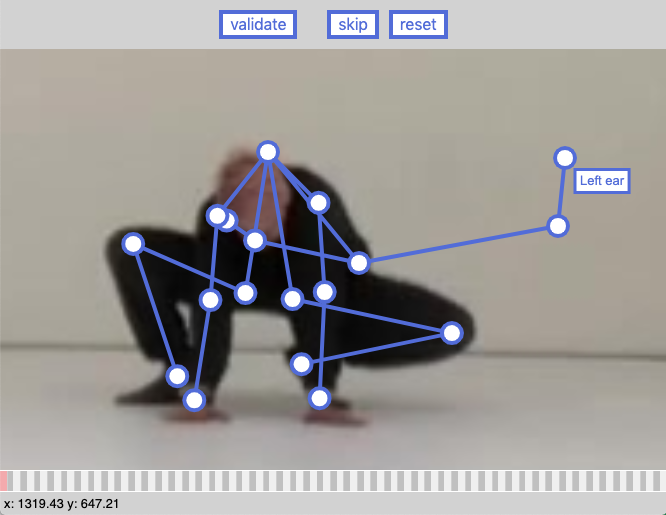

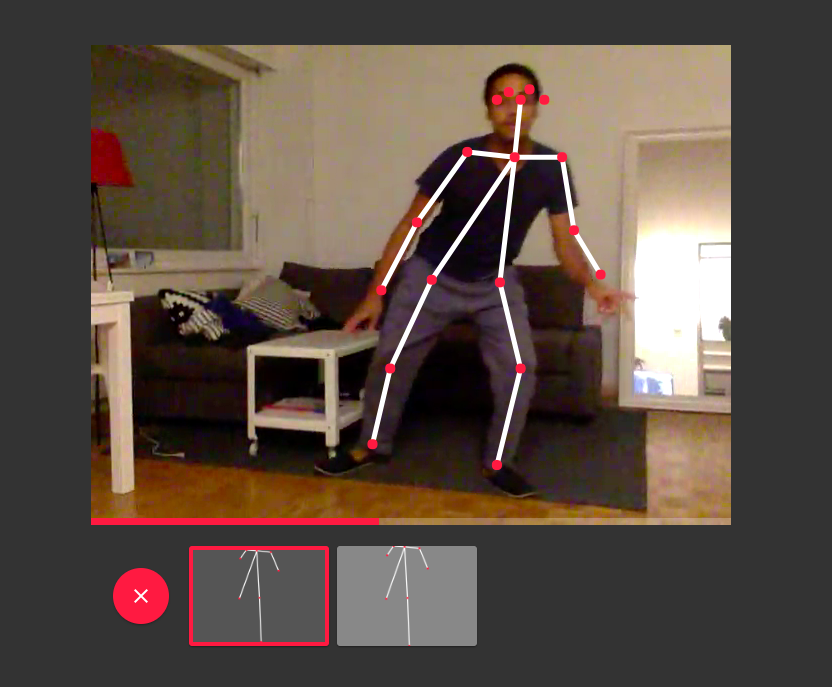

We ended up manually labeling objects (with a single category) then training a YOLOv5 model on those, which yielded pretty good results.

Story time!

Something that proved unexpectedly challenging was smoothly animating the camera between different orientations. The playback had to be done through Python + libvlc, which expects Euler angles (yaw, pitch and roll) as input for the camera orientation.
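Before any animation, a detected object's position in the frame has to become a yaw and a pitch. Here is a minimal sketch of that step, assuming the 360 video is stored as an equirectangular frame (the post does not say so) and that the frame centre corresponds to yaw 0, pitch 0. The function name and sign conventions are made up for the example and may not match what the player expects.

```python
def equirect_to_yaw_pitch(x, y, width, height):
    """Map a pixel in an equirectangular frame to (yaw, pitch) in degrees.

    Assumes the frame centre is yaw=0, pitch=0, that yaw grows to the
    right and pitch grows upwards. A real player may use other signs.
    """
    yaw = (x / width - 0.5) * 360.0     # full width spans 360 degrees
    pitch = (0.5 - y / height) * 180.0  # full height spans 180 degrees
    return yaw, pitch


# Centre of a 3840x1920 frame, then a point to the upper right of it.
print(equirect_to_yaw_pitch(1920, 960, 3840, 1920))
print(equirect_to_yaw_pitch(2880, 480, 3840, 1920))
```

Roll can usually stay at zero for this kind of camera move, which leaves two angles to animate per target.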

The “numpy-quaternion” library seemed like a good candidate: it had everything I needed, including the slerp feature I was looking for (to animate between two rotations). However, the library’s author had pretty strong opinions on Euler angles:

[convert to euler angles]

> Open Pandora’s Box
>
> If somebody is trying to make you use Euler angles, tell them no, and walk away, and go and tell your mum.
>
> You don’t want to use Euler angles. They are awful. Stay away. It’s one thing to convert from Euler angles to quaternions; at least you’re moving in the right direction. But to go the other way?! It’s just not right.
>
> Assumes the Euler angles correspond to the quaternion R via
>
> `R = exp(alpha*z/2) * exp(beta*y/2) * exp(gamma*z/2)`
>
> The angles are naturally in radians.
>
> NOTE: Before opening an issue reporting something “wrong” with this function, be sure to read all of the following page, *especially* the very last section about opening issues or pull requests. https://github.com/moble/quaternion/wiki/Euler-angles-are-horrible

Mike Boyle – Documentation for the “convert to euler angles” function in his quaternion library.

I needed a specific kind of Euler angles, not the one he provides, so I had to look elsewhere.

So I turned to the Rotation class in scipy’s spatial.transform module. This library is pretty overkill for us, but at least now I have all the Euler conversion functions I can dream of.
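To give an idea of what those conversion functions look like, here is a small sketch using `Rotation.from_euler` and `Rotation.as_euler`. The `"YXZ"` axis sequence and the angle values are assumptions picked for illustration; the post does not say which convention the player actually needed.

```python
import numpy as np
from scipy.spatial.transform import Rotation

yaw, pitch, roll = 30.0, -20.0, 5.0  # degrees, arbitrary example values

# Upper-case axes mean intrinsic rotations; "YXZ" is an assumed convention.
rot = Rotation.from_euler("YXZ", [yaw, pitch, roll], degrees=True)

# Converting back with the same sequence recovers the original angles.
same_convention = rot.as_euler("YXZ", degrees=True)

# The same rotation read with another sequence gives different numbers.
other_convention = rot.as_euler("ZYX", degrees=True)

print(np.round(same_convention, 3))
print(np.round(other_convention, 3))
```

The point is that "Euler angles" only mean something once the axis sequence is fixed, which is why a library offering a single convention may not be enough.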

When trying to use scipy’s Slerp I had trouble figuring out the correct syntax, and the documentation was not very helpful, so when I found a function called “geometric slerp” (scipy.spatial.geometric_slerp), I tried it and it seemed to work.

It turns out that “geometric slerp” and “slerp” are completely different methods: they behave quite similarly on the simple examples I used while trying to figure out what was going on, but quite differently in other situations.
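One way the two can diverge (not necessarily the exact case I ran into) is a rotation of more than 180 degrees. `geometric_slerp` interpolates between plain unit vectors, so when it is fed quaternions it follows the arc between them in 4D, while `Slerp` interpolates the relative rotation and takes the short way round. The sketch below uses a 200 degree turn about z as an arbitrary example.

```python
import numpy as np
from scipy.spatial import geometric_slerp
from scipy.spatial.transform import Rotation, Slerp

# Quaternions in scipy's (x, y, z, w) order: identity, and 200 degrees about z.
half = np.radians(200.0) / 2
q_start = np.array([0.0, 0.0, 0.0, 1.0])
q_end = np.array([0.0, 0.0, np.sin(half), np.cos(half)])

# geometric_slerp treats the quaternions as plain 4D unit vectors.
q_mid = geometric_slerp(q_start, q_end, [0.0, 0.5, 1.0])[1]
geo_mid = Rotation.from_quat(q_mid)

# Slerp works on Rotation objects and interpolates the relative rotation.
slerp_mid = Slerp([0, 1], Rotation.from_quat([q_start, q_end]))(0.5)

# Signed angle about z at the halfway point, in degrees.
geo_angle = np.degrees(geo_mid.as_rotvec()[2])
slerp_angle = np.degrees(slerp_mid.as_rotvec()[2])
print(round(geo_angle, 3), round(slerp_angle, 3))
```

Halfway through, the first should come out near 100 degrees and the second near -80 degrees: both end up at the same final orientation, but they get there by turning in opposite directions. For turns under 180 degrees built this way the two agree, which would explain why simple tests look fine.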

In the end, the Slerp function was the correct one, and I found a (rather dodgy) way of using it:

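    from scipy.spatial.transform import Rotation, Slerp

    # The function name is illustrative; the original snippet only showed the body.
    def make_slerp(current_rotation, target_rotation):
        # Create a Rotation object that contains the current and target
        # rotations by converting them to quaternions and back.
        rots = Rotation.from_quat([
            current_rotation.as_quat(),
            target_rotation.as_quat(),
        ])
        # Create a Slerp instance from the combined Rotation object,
        # with key times 0 (current) and 1 (target).
        return Slerp([0, 1], rots)

The end!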

Interesting Stuff I found during research

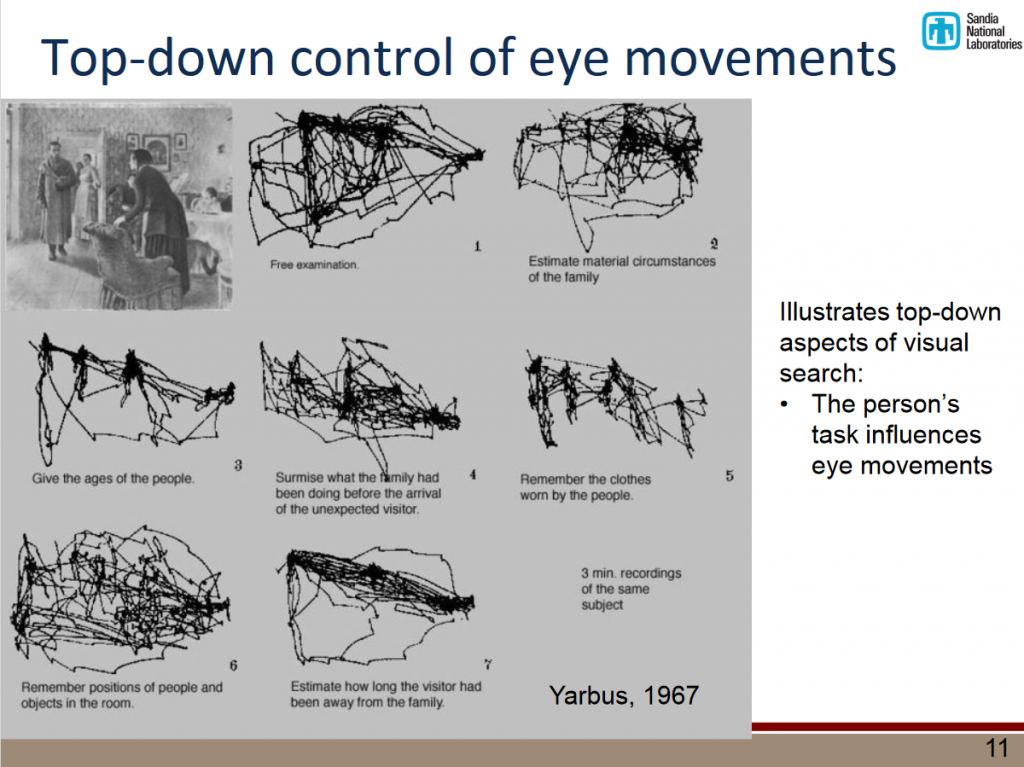

- Using Eye Tracking Metrics and Visual Saliency Maps to Assess Data Visualizations

Explores how different parameters affect a person’s gaze (age, gender, task, etc.).